Evaluation Process

Review a flow against guideline criteria, record answers, and save progress.

What the Evaluation Process Does

The evaluation process is where you review an existing flow against the guideline criteria you already defined. You are not creating the flow or writing new criteria here. Instead, you are applying the current guideline set to the flow, recording your answers, and letting UXit calculate the results from those responses.

In practice, most evaluators keep the design, mockup, prototype, or page they are reviewing open in another tab or window while they work through the criteria.

Evaluation Process covers the active review workflow after an evaluation has been created. To create, organize, and revisit evaluation records, use Evaluation Management.

Understanding the Evaluation Workspace

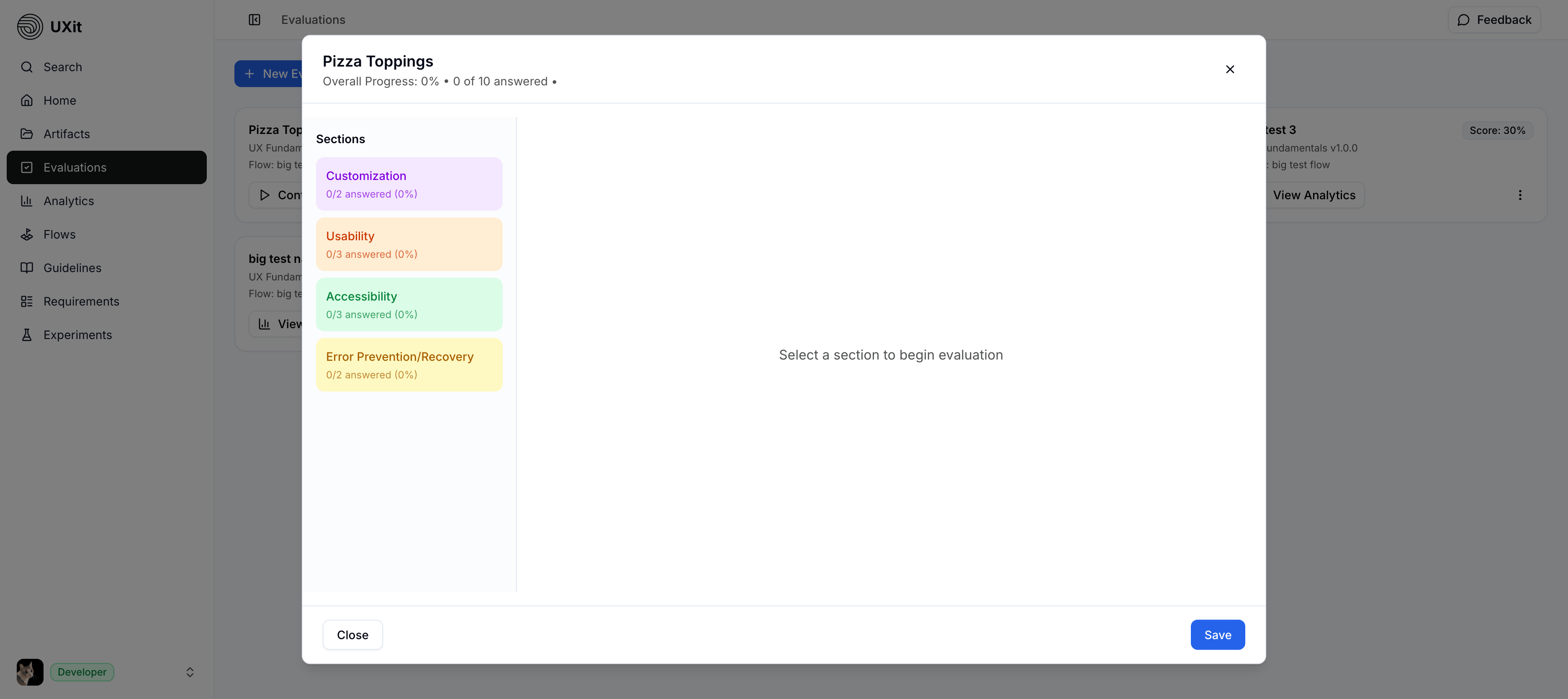

When an evaluation opens, you enter a dedicated review workspace with progress tracking, color-coded sections, and the selected flow's full guideline set.

At the top, the evaluation shows your overall progress and how many criteria have been answered so far.

On the left, each section represents a guideline category. The category colors come directly from your guideline category settings, so the same color choices carry through the app and help evaluators scan more quickly. If you later change a category color in Manage Categories, that update is reflected throughout the system.

Tip

Keep category names and colors consistent over time. Stable visual grouping makes evaluations easier to scan and makes analytics easier to interpret later.

Working Through Sections

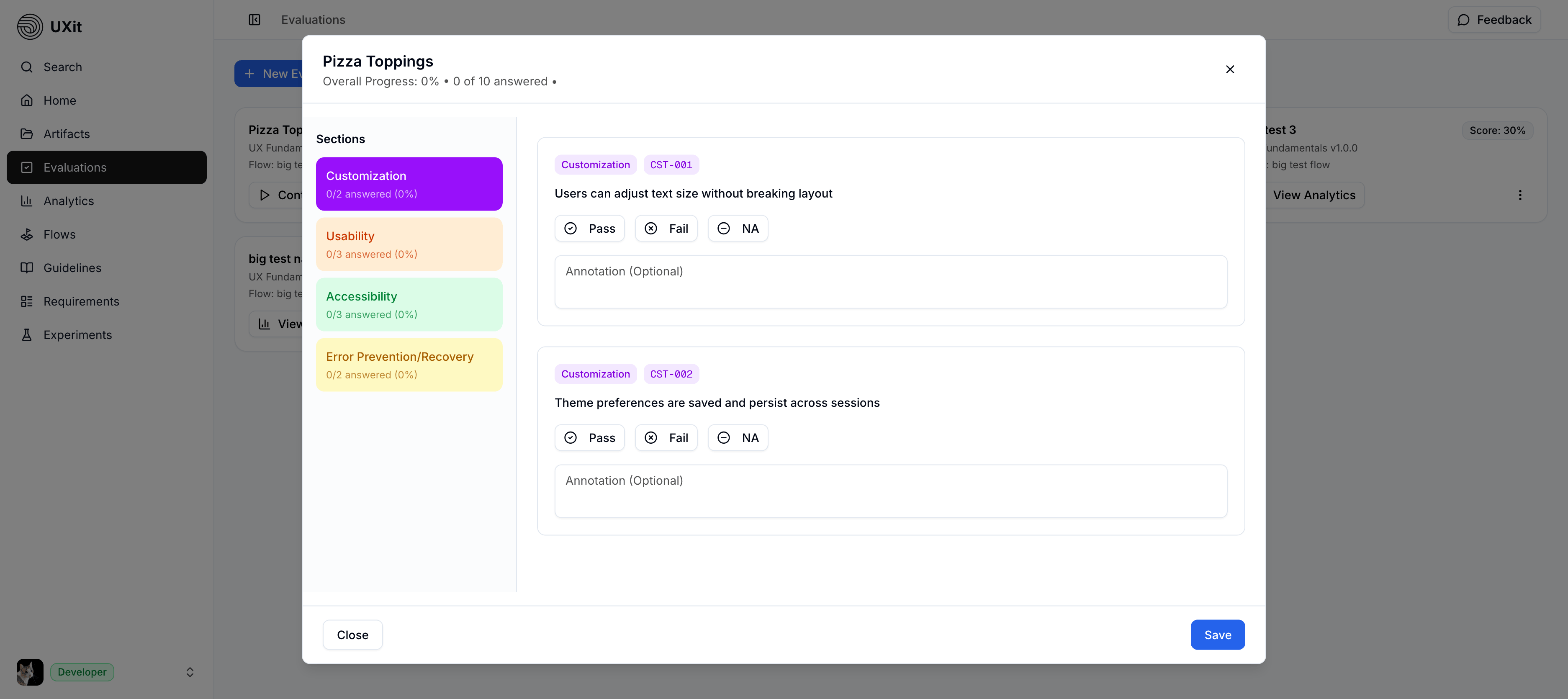

Select a section on the left to load that category's criteria into the review panel.

Category order affects the way evaluators move through the review. A logical order makes the process easier to follow, faster to repeat, and more consistent across versions.

Each criterion includes:

- Category label: The category the criterion belongs to.

- Criterion ID: A short reference for handoff and review.

- Criterion text: The rule being evaluated.

- Response controls: Pass, Fail, or N/A.

- Annotation field: Optional plain-text notes.

Criterion IDs are especially useful in handoff, tickets, and follow-up review conversations because they give teams a short reference for a specific issue.

Answering Questions

Once a section is selected, answer each criterion based on the flow or design you are reviewing.

Use the responses this way:

- Pass - The design meets the criterion.

- Fail - The design does not meet the criterion.

- N/A - The criterion does not apply to this version of the flow and is excluded from the scoring math.

Only Pass and Fail count toward the score. Because N/A answers are left out of the calculation entirely, they neither raise nor lower the result.
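The scoring rule above can be illustrated with a short sketch. It assumes the score is the percentage of Pass answers among all Pass and Fail answers. UXit's actual formula is not described in this guide and may differ (for example, by weighting categories). The `Response` enum and `score` function are hypothetical names used only for illustration.

```python
from enum import Enum


class Response(Enum):
    PASS = "pass"
    FAIL = "fail"
    NA = "n/a"


def score(responses):
    """Hypothetical score: percentage of Pass among Pass + Fail answers.

    N/A answers are dropped before dividing, so they neither raise
    nor lower the result.
    """
    counted = [r for r in responses if r is not Response.NA]
    if not counted:
        return None  # nothing scorable: every criterion was N/A
    passes = sum(1 for r in counted if r is Response.PASS)
    return 100 * passes / len(counted)


answers = [Response.PASS, Response.FAIL, Response.NA, Response.PASS, Response.PASS]
print(score(answers))  # 75.0 (3 passes out of 4 scored criteria)
```

Adding or removing N/A answers leaves the result unchanged, which is why marking a non-applicable criterion as Pass or Fail would distort the score.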

Using Annotations

Each criterion includes an annotation field for plain-text notes. Use it to capture context while you are evaluating.

Annotations are especially helpful when a criterion fails because of one specific element inside a larger page or flow. For example, a typography criterion may fail because only one paragraph uses the wrong size, or a button criterion may fail because only one button in a group is incorrect. The criterion still fails overall, but the annotation helps identify the exact paragraph, button, or location that needs attention.

Good annotations are specific and location-aware. For example:

- Second paragraph in the left column uses the wrong font size

- Delete button in the bottom-right action group has insufficient contrast

- Confirmation modal title wraps awkwardly on smaller screens

These notes are saved with the evaluation and can support later review, analysis, and handoff.

N/A vs Exclude Condition

N/A and Exclude Condition solve similar problems at different stages.

- Use Exclude Condition in the guideline editor when you already know a criterion should not appear in the next evaluation.

- Use N/A during the evaluation when the criterion appears, but turns out not to apply to this specific version of the flow.

Example

Suppose a criterion says "All badges use this font size," but the newer version of the same flow no longer uses badges. That criterion should be marked N/A rather than Pass or Fail, so it is excluded from the math instead of incorrectly helping or hurting the score.

Saving Progress

Evaluations do not autosave. Use the Save button to keep your work.

You do not have to finish everything in one sitting. You can save your progress, leave the evaluation, and return later to continue.

Save Required

There is no autosave in the evaluation workspace. If you want to keep your current answers or annotations, click Save before leaving.

Completion Rules

Every criterion must be answered with Pass, Fail, or N/A before the evaluation can be processed.

If even one criterion is left unanswered, the evaluation cannot be fully processed. This keeps the results complete and ensures the scoring logic has a decision for every included item.
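The completion rule, together with a simple answered/total progress count, can be sketched as follows. This assumes each criterion's answer is stored as `None` until an evaluator picks Pass, Fail, or N/A. The function names and the criterion IDs (`TYP-01` and so on) are made up for illustration and are not part of UXit.

```python
from typing import Optional

# Accepted responses; anything else (including None) counts as unanswered.
VALID_RESPONSES = {"pass", "fail", "n/a"}


def unanswered(answers: dict[str, Optional[str]]) -> list[str]:
    """Return the IDs of criteria that do not yet have a valid response."""
    return [cid for cid, answer in answers.items() if answer not in VALID_RESPONSES]


def progress(answers: dict[str, Optional[str]]) -> tuple[int, int]:
    """Return (answered, total); N/A counts as an answer."""
    total = len(answers)
    return total - len(unanswered(answers)), total


def can_process(answers: dict[str, Optional[str]]) -> bool:
    """An evaluation is processable only when no criterion is unanswered."""
    return not unanswered(answers)


answers = {"TYP-01": "pass", "BTN-03": "fail", "BDG-02": None}
print(progress(answers))     # (2, 3)
print(unanswered(answers))   # ['BDG-02']
print(can_process(answers))  # False
```

Note that N/A satisfies the completion rule even though it is excluded from scoring: it records a deliberate decision rather than a gap.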

Complete the evaluation carefully. Once it is submitted as final, the answers are treated as locked, so make any revisions before you submit.

Best Practices

- Keep the design or prototype open in another tab or window while evaluating.

- Work section by section so progress is easier to track.

- Use annotations whenever a failure needs extra context for handoff.

- Use N/A only when a criterion truly does not apply to the evaluated version of the flow.

- Save regularly, since the workspace does not autosave.

Coming Soon

Image and video annotation support will be added to this evaluation interface in a future update. For now, use text annotations to capture location-specific context while reviewing.